You are born private.

Your thoughts are private.

You are your thoughts.

Data is the reflection of your thoughts.

Systems ought to make your data private

Right when your data is born

Until deleted by you

Everywhere.

Protect your data.

Protect your thoughts.

Protect you.

Everywhere.

That’s NUTS.

CyberSecurity

IoD™: The Internet of Data™

By Yoon Auh, Founder of NUTS Technologies, August 27th, 2022.

The Internet of Data™ (IoD™) enables direct access to data across any network. Accessing data directly is possible when data has at least two characteristics: identification and privacy.

Identification of Data

Identification of objects is conventionally implemented within narrow scopes such as VIN, SSN, cell number, MEID, MAC, IPv4, IPv6, etc. For IoD, any data is eligible for permanent identification using a Practically Unique ID (PUID) by any capable device.

Identification of data is as narrow or broad as required and easily implemented with an identifier: the larger the identifier, the bigger the possible universe of data. A large enough identifier can identify the data across space and time. The size of the data identifier can start small and grow as needed; as implemented, this identifier is defined as the NutID. NutIDs are unstructured identifiers to maximize anonymity; as a consequence, guessing the identifier of a given piece of data by brute force is very expensive.
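The relationship between identifier size and guessing cost can be made concrete with a short sketch. The Python below is illustrative only: the helper names `new_nut_id` and `expected_guesses` are hypothetical, and drawing the identifier from the operating system's random source is an assumption, not a description of how NUTS actually generates NutIDs.

```python
import secrets


def new_nut_id(num_bytes: int = 16) -> str:
    """Return an unstructured identifier as a hex string (hypothetical helper)."""
    return secrets.token_hex(num_bytes)


def expected_guesses(num_bytes: int, ids_in_use: int) -> float:
    """Average number of uniform random guesses needed to hit any ID in use."""
    return (2 ** (8 * num_bytes)) / ids_in_use


if __name__ == "__main__":
    print(new_nut_id())
    # Assume a trillion identifiers are already in use.
    for size in (8, 16, 32):
        print(size, "bytes:", f"{expected_guesses(size, 10**12):.3e}", "guesses")
```

Each extra byte multiplies the search space by 256, so an identifier can start small and grow as the universe of data grows.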

Privacy of Data

Privacy of data is conventionally controlled through access gateways and obfuscations (ciphering), each of which presents numerous technical challenges when done at scale. To secure data for IoD, it is convenient to have a compact, fine-grained access control system that is cryptologically implemented as data; we define data secured by a compact, independent, portable access control system as Zero Trust Data (ZTD). Other equivalent terms for ZTD are “Secure Container” and “Security at the Data Layer”.

A Secure Container implemented as an encapsulation can be used to envelop the payload to accommodate a wide variety of object and file formats. Within the secure container, any metadata is also protected (immutable).

We define a simple nut container as a secure container with an immutable NutID as metadata and the data to be protected as the payload where the access controls are expressed as sets of cryptographic keys configured into progressively revealing data structures.

Thus, data in a nut can be accessed directly by any key holder(s) on the Internet of Data.

Independent Data

Data in a nut is independent data. An IoD ecosystem can provide transport and locate services for independent data across any network. The nut can be addressed directly by its NutID; further, the nut can address other nuts by their respective NutIDs. A NutID is therefore a permanent reference to a nut, in contrast to impermanent URL paths. In essence, the secure container protects and identifies its payload. A nut with a payload of NutIDs is an example of data directly addressing other data.
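As a rough illustration of data addressing other data, the sketch below models a nut whose payload is a list of NutIDs. It is a toy under stated assumptions: the `Nut` and `LocateService` classes are hypothetical, the in-memory dictionary stands in for whatever locate service an IoD ecosystem provides, and no cryptography is applied.

```python
import secrets
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Nut:
    """Toy stand-in for a nut: an ID plus a payload (no cryptography here)."""
    payload: bytes = b""
    references: tuple = ()  # NutIDs of other nuts carried as payload
    nut_id: str = field(default_factory=lambda: secrets.token_hex(16))


class LocateService:
    """Hypothetical lookup from a NutID to wherever the nut currently lives."""

    def __init__(self):
        self._store = {}

    def put(self, nut: Nut) -> str:
        self._store[nut.nut_id] = nut
        return nut.nut_id

    def get(self, nut_id: str) -> Nut:
        return self._store[nut_id]

    def resolve(self, nut_id: str) -> list:
        """Follow the NutIDs held by a nut and return the referenced nuts."""
        return [self.get(ref) for ref in self.get(nut_id).references]


if __name__ == "__main__":
    iod = LocateService()
    report = iod.put(Nut(payload=b"quarterly report"))
    photo = iod.put(Nut(payload=b"team photo"))
    index = iod.put(Nut(references=(report, photo)))
    print([n.payload for n in iod.resolve(index)])
```

Because the index nut stores only NutIDs, the referenced nuts can move between storage locations without the index changing.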

It is nontrivial for other web servers to store and forward URLs, whereas a nut can be handled by any relay mechanism due to its intrinsic security and portability. URIs and URLs are ever-changing and may not be the same the next time you visit them. Documents not visible to web searches are very difficult to track down. Document authentication is even more difficult. In contrast, the NutID of a document will never change, and it references the actual document, which will self-authenticate upon presentation of a valid key.

Independent data in a nut protects its payload and only the key holder(s) can easily access it; therefore, a nut can be safely stored anywhere on the IoD. Expressed as a generic file format such as JSON-base64, a nut is independent of most Operating Systems, File Systems and Cloud Systems. Getting a copy of a random nut is not enough to access its contents; one must present a valid credential in the form of a cryptographic key. Since the access controls for the nut are embedded within its container material as cryptographic data elements, the payload is consistently protected in any environment independent of reference monitors.
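To show what a JSON-base64 expression might look like, here is a minimal sketch. The field names `nut_id` and `payload` and both function names are assumptions made for illustration; the actual nut layout is not specified in this article. In a real nut the payload would already be ciphered, so the bytes are treated here as opaque.

```python
import base64
import json


def nut_to_json(nut_id: str, sealed_payload: bytes) -> str:
    """Encode a nut as JSON text, with binary content carried as base64."""
    return json.dumps({
        "nut_id": nut_id,
        "payload": base64.b64encode(sealed_payload).decode("ascii"),
    })


def nut_from_json(text: str) -> tuple:
    """Decode the JSON text back into (nut_id, sealed_payload)."""
    doc = json.loads(text)
    return doc["nut_id"], base64.b64decode(doc["payload"])


if __name__ == "__main__":
    text = nut_to_json("example-id", b"\x00\x01opaque bytes\xff")
    print(text)
    print(nut_from_json(text))
```

Since the result is plain text, it can be stored or relayed by any system that handles JSON, whatever the operating system or file system.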

Key Management

A secure system requires the safekeeping of secrets such as cryptographic keys in a systematic way on behalf of the user, a nontrivial problem. In the evolving world of data security, the ownership of cryptographic keys is an important factor in establishing the ownership of ciphered data. Both concerns can be addressed effectively and simply within an IoD implementation using nut containers.

IoD as implemented by the NUTS ecosystem provides each user installation with a key management system (KMS) built on nut containers. Since a nut identifies and protects a payload of any storable digital data, a cryptographic key or digital credential stored as payload in a nut is private and identified in a universal way.

A nut container can be configured with an arbitrary number of keyholes each of which is identified by a NutID (or within this context, a KeyID). This presents the raw building blocks to construct a secure, robust and modular KMS using nuts as key carriers: a simple, elegant, logical and massively scalable design.
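The idea of several keyholes opening the same container can be sketched with a toy model. Everything below is an assumption for illustration: the `ToyNutLock` class, the XOR-with-HMAC masking, and the stored check value are not the NUTS design, and the masking is not a vetted key-wrap algorithm. A production system would use an established key-wrapping scheme from a reviewed cryptographic library.

```python
import hashlib
import hmac
import secrets


def _mask(holder_key: bytes, key_id: str) -> bytes:
    """Derive a 32-byte mask from a holder's key and the keyhole's KeyID."""
    return hmac.new(holder_key, key_id.encode(), hashlib.sha256).digest()


def _xor(a: bytes, b: bytes) -> bytes:
    return bytes(x ^ y for x, y in zip(a, b))


class ToyNutLock:
    """Toy model: one payload secret, reachable through many keyholes."""

    def __init__(self):
        self._secret = secrets.token_bytes(32)  # would unlock the payload
        self.check = hashlib.sha256(self._secret).hexdigest()
        self.keyholes = {}  # KeyID -> masked copy of the payload secret

    def add_keyhole(self, holder_key: bytes) -> str:
        key_id = secrets.token_hex(16)  # a KeyID is a NutID-style identifier
        self.keyholes[key_id] = _xor(self._secret, _mask(holder_key, key_id))
        return key_id

    def unlock(self, key_id: str, holder_key: bytes) -> bytes:
        """Return the payload secret, or raise if the key does not fit."""
        candidate = _xor(self.keyholes[key_id], _mask(holder_key, key_id))
        digest = hashlib.sha256(candidate).hexdigest()
        if not hmac.compare_digest(digest, self.check):
            raise PermissionError("key does not fit this keyhole")
        return candidate
```

Each key holder recovers the same payload secret through a different keyhole, so adding a holder means adding a keyhole without re-ciphering the payload.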

Conclusion

Our research shows that when properly engineered, IoD can provide an individual user with features often associated with the most sophisticated IT organizations such as hybrid cloud data management, data resiliency, ransomware mitigation, insider threat mitigation, automatic backup, hot backup, secure data sharing, automated synchronization, cipher agnostic cryptography, on device key management, and key ownership. All of these features are delivered in one ecosystem, in an integrated fashion, expressed as protected, identifiable data storage units.

Conventional approaches may provide most of the features listed above but may require many solutions to be configured simultaneously by knowledgeable people, with integration as a secondary concern. Insider threat mitigation is only attempted by organizations with deep pockets and the need, whereas the NUTS ecosystem delivers insider threat mitigation within every nut container in an independent way: the epitome of Zero Trust Data.

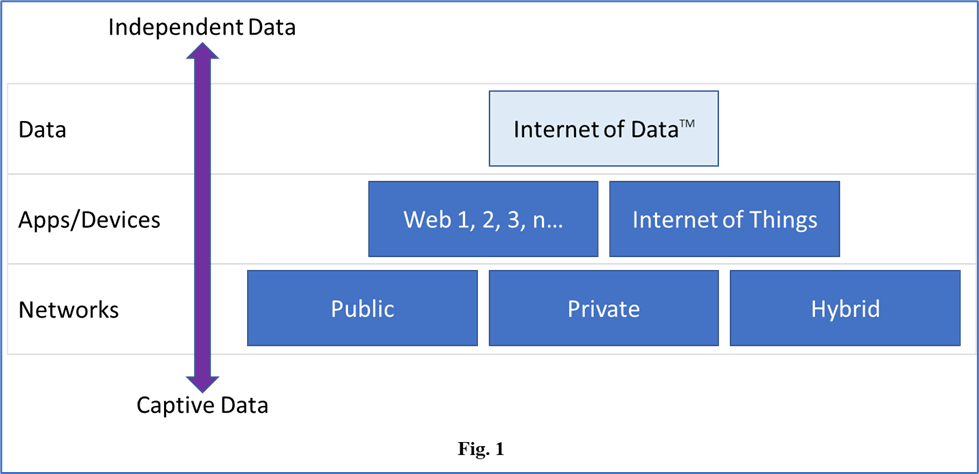

The Internet of Data establishes a new abstraction layer for the way we can interact with data and the way data can interact with other data (Fig. 1). IoD puts forth an environment where Operating Systems, File Systems and Networks are commoditized and your Data is prioritized.

In all honesty, does any user authenticate into a system for the pure pleasure of logging into a system? No, because, in the end, it’s all about the Data.

You’re Invited to our Annual NUTS Technologies DC CyberWeek Event!

We hope you can join us this coming October 22nd for our annual DC CyberWeek showcase.

Come on down, grab a cup of coffee, and learn a bit more about the groundbreaking developments happening at NUTS Technologies.

This year’s event will feature:

• Structured Cryptographic Programming…

• Structured Data Folding with Transmutations…

• Quantum Resistant Cryptographic Framework…

• and DNA Inspired Data Centric Design

We are also excited to announce this year’s presentation will be accompanied by a demonstration of the NUTS prototype! We look forward to walking you through how NUTS implements object level controls of security at the data layer and beyond…

Event Details:

Date: October 22nd, 2019 | 9:00 – 11:00 am

Venue: DLA Piper Building, 500 8th Street NW, Washington, DC 20004

Price: This event is FREE to attend but seats are limited, so register today to reserve your spot!

Event Link: “Implementing Object Level Controls for Security at the Data Layer”

https://2019dccyberweek.sched.com/event/Kr9g/implementing-object-level-controls-for-security-at-the-data-layer?iframe=no&w=100%25&sidebar=yes&bg=no

If you have an interest in data Security, Privacy, and ‘Need to Know’, you won’t want to miss this event. We look forward to seeing you there!

All the best,

NUTS Technologies Team

info@nutstechnologies.com

www.nutstechnologies.com

Upcoming Event – NUTS Demonstration at DC CyberWeek 2019

Don’t miss your chance to witness the first public unveiling of the NUTS beta demonstration at DC CyberWeek 2019 in Washington D.C. Tuesday Oct 22nd @ 9AM,

“Implementing Object Level Controls for Security at the Data Layer”

Paradigm Shift: Thomas Kuhn defined the term to describe the periodic upheavals in the progress of scientific knowledge. Would you recognize a paradigm shift if you saw one?

The title of the presentation is directly from an active DoD RIF. We will show you what that future looks like in a working demo, and the future lands on a Tues morning in October. From data structures to custom protocols, all with security integrated organically. What does that last statement mean? What does such a thing look like? How does it function? How can it be utilized?

We are presenting in the heart of D.C. at the DLA Piper building. Refreshments and snacks will be served. Come hungry in mind and body. You won’t be disappointed. Bring your colleagues.

You can register for the event by clicking on the link below

Regards,

NUTS Technologies Team

DC CyberWeek 2018 thank you!

The NUTS Technologies team would like to thank CyberScoop for organizing DC CyberWeek. A special thanks to DLA Piper’s Jim Halpert, Diane Miller, and Catherine Tucciarone for providing us a great venue to host the NUTS presentation.

International Conference of Data Protection and Privacy Commissioners

The NUTS Technologies team would like to thank Mr. Giovanni Buttarelli and his team for hosting the 40th International Conference of Data Protection and Privacy Commissioners. We look forward to the next conference!!

We are back at DC CyberWeek 2018!!

NUTS Technologies is happy to announce that we were invited back to host at DC CyberWeek 2018.

Register now for ‘The God Complex: Temptations of the Forbidden Fruit in the Digital Garden’ on Tuesday Oct 16 at the DLA Piper Building, Washington DC.

Event page http://sched.co/G8Fq

General conference information can be found here

Data in the future becoming self aware?

A friend pointed out this video (link below) of CTO Mark Bregman speaking at NetApp INSIGHT 2017 in Berlin, Germany. Mr. Bregman sees data in the future becoming self aware, and the need for an architecture in which data drives the processing.

What Mark is actually describing is “Data Centric Design” and the NUTS technologies. He further states that “this is not a near term prediction, this is not one for next year. Because it requires rethinking how we think about data and processing…”

At NUTS Technologies, we’ve been busy architecting the future of data and we are already implementing it.

-NUTS Technologies Team

#NetAppInsight #DataDriven #DataCentricDesign #NUTSTechnologies

To rest, or not to rest, that is the question

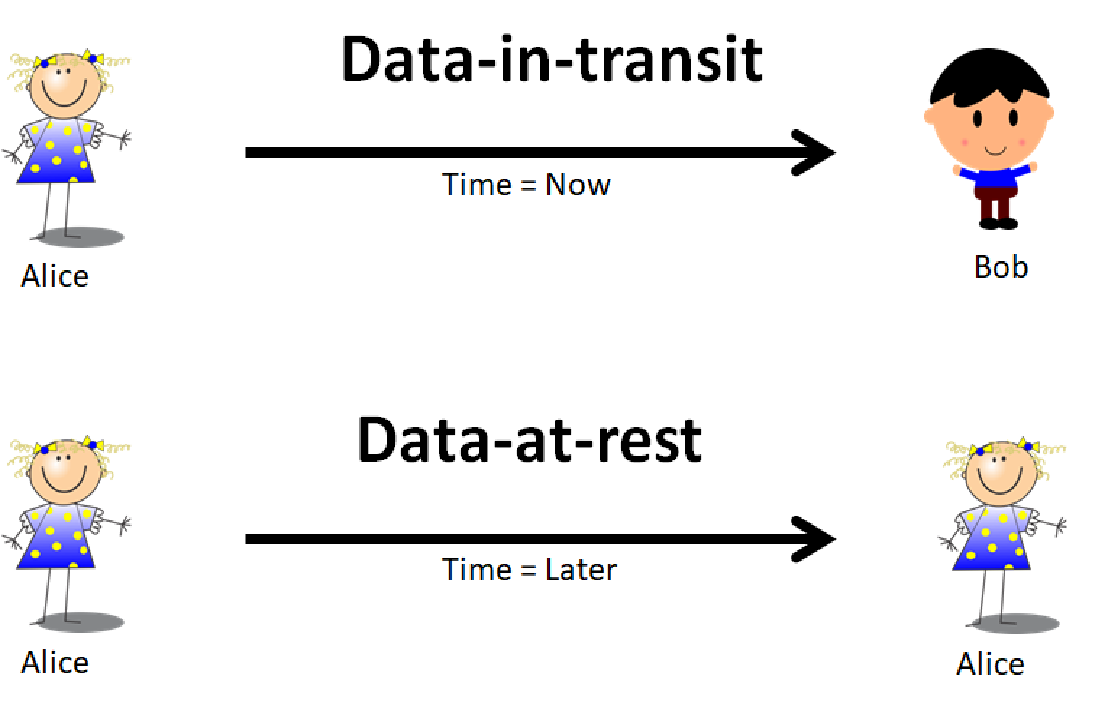

In computer systems, data that is stored onto static storage such as a flash drive or hard drive is referred to as “data-at-rest”. In contrast, data that is sent from point A to point B such as chat messages or network traffic is referred to as “data-in-transit”. The mindsets and toolsets that address these two states of data are very different especially when it comes to securing the data.

Data-at-rest techniques usually involve some form of encrypting the data prior to saving it onto the storage mechanism. This is usually called file encryption. Data-in-transit techniques usually rely on secure protocols, which create a secure pathway between two endpoints so that anything sent between them is deemed secure. Nowadays, there are hybrids of these methodologies called End-To-End Encryption (E2EE), where messages may be encrypted prior to sending between the two points via a secure communication session. Today’s E2EE solutions offer some semblance of security but are often non-standard, hard to integrate, hard to manage and/or fall short of securing both states of data in a seamless, cohesive fashion.

In Data Centric Design (DCD), there is only one state of data: transmission. Data is generated, transmitted, and then consumed. The NUTS paradigm augments that DCD sequence to: data is generated, secured, transmitted, authenticated, and then consumed.

Storing data is an act of transmitting the data to the future.

For example, securing data-in-transit usually requires two participants, Alice and Bob, to form a communications channel between them using a secure protocol and move data through the channel at time TNOW. When this is done properly, Alice can send Bob a message in near real-time securely. If we replace Bob with Alice and change the time to TLATER, this can become data-at-rest: Alice is securely sending a message to her future self.

Why does that matter? It simplifies what is traditionally considered two separate methods of securing data down to one unifying view. Thus, we are left to solve only the single problem of securing data for transmission. In this definition, the distinction between data storage and transmission blurs until the two mean the same thing; it’s just a matter of timing. In the TNOW case, the consumption of the data is done nearly instantaneously by Bob, but in the TLATER case, the consumption of the data is done at a later time by Alice. The example can be expanded to allow Bob or anyone else to consume the transmitted data at a later time.

| Time = TNOW | To Alice | To Bob |
| --- | --- | --- |
| From Alice | Data-in-transit | Data-in-transit |
| From Bob | Data-in-transit | Data-in-transit |

| Time = TLATER | To Alice | To Bob |
| --- | --- | --- |
| From Alice | Data-at-rest | Data-at-rest |
| From Bob | Data-at-rest | Data-at-rest |

A functional expression:

transmit(m, s, ts, r, tr)

where

m: message

s: sender

ts: send time

r: receiver

tr: receive time

therefore

send_message(m, Alice, Bob) ≈ transmit(m, Alice, t0, Bob, t0)

save_to_disk(filename, m) ≈ transmit(m, Alice, t0, filename, t0)

read_from_file(filename) ≈ transmit(m, filename, t1, Alice, t1)
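The mapping above can be sketched in Python to show that sending, saving, and reading all reduce to one call. This is only an illustration: the `Transmission` record, the in-memory `DISK` dictionary, and the default owner and time values are assumptions, and no securing or authenticating step is modeled.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Transmission:
    m: bytes   # message
    s: str     # sender
    ts: float  # send time
    r: str     # receiver
    tr: float  # receive time


DISK = {}  # hypothetical stand-in for a storage device


def transmit(m, s, ts, r, tr) -> Transmission:
    """Record a transmission; a real system would also secure and deliver m."""
    return Transmission(m, s, ts, r, tr)


def send_message(m, sender, receiver, t0=0.0):
    return transmit(m, sender, t0, receiver, t0)


def save_to_disk(filename, m, owner="Alice", t0=0.0):
    DISK[filename] = m
    return transmit(m, owner, t0, filename, t0)


def read_from_file(filename, reader="Alice", t1=1.0):
    return transmit(DISK[filename], filename, t1, reader, t1)


if __name__ == "__main__":
    print(send_message(b"hello", "Alice", "Bob"))
    print(save_to_disk("note.txt", b"hello"))
    print(read_from_file("note.txt"))
```

Saving and then reading together carry the message from Alice at t0 to Alice at t1, which is the "message to her future self" described earlier.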

There are many ways to secure messages before transmission but very few offer a secure container that can be used for both states of data in a consistent, simplified and independent manner. What I’ve deduced over the years is that many problems that we have with our digital systems can be traced back to inadequately designed data containers. NUTS provides the technology to solve these inadequacies. In a later post, we’ll examine concepts called Strong and Weak Data Models.

This illustrates a core technique that Data Centric Design applies to problems big and small regardless of technical domain: root cause analysis. Finding the root cause of some problems may require you to re-frame the questions with a new perspective. If you then solve the root cause, many of the symptoms never appear. The hard part is collecting a set of symptoms that appear unrelated and then looking for possible relationships.

Last week, DC CyberWeek presented very informative events and many opportunities to network with some very smart people in the cybersecurity industry. The CyberScoop folks did an incredible job of organizing the whole affair. My deepest thanks to Julia Avery-Shapiro from CyberScoop for accepting our event idea, and for guiding us through hosting our first ever cyber security event. I also want to thank our attorney Jim Halpert for graciously offering the use of their offices at DLA Piper, and Susan Owens for her coordination wizardry. I hope the folks who attended were rewarded with new information and ideas from our presentation.

We will be hosting a DC CyberWeek event!

Join us in Washington D.C. in 2 weeks.

Register now for Socratic Dialogue: The God Key Problem on Oct 17 at CyberScoop’s DC CyberWeek: http://bit.ly/2tNjT42

Event page http://sched.co/CUVI

This will be an old school discussion on The God Key Problem and its solutions.